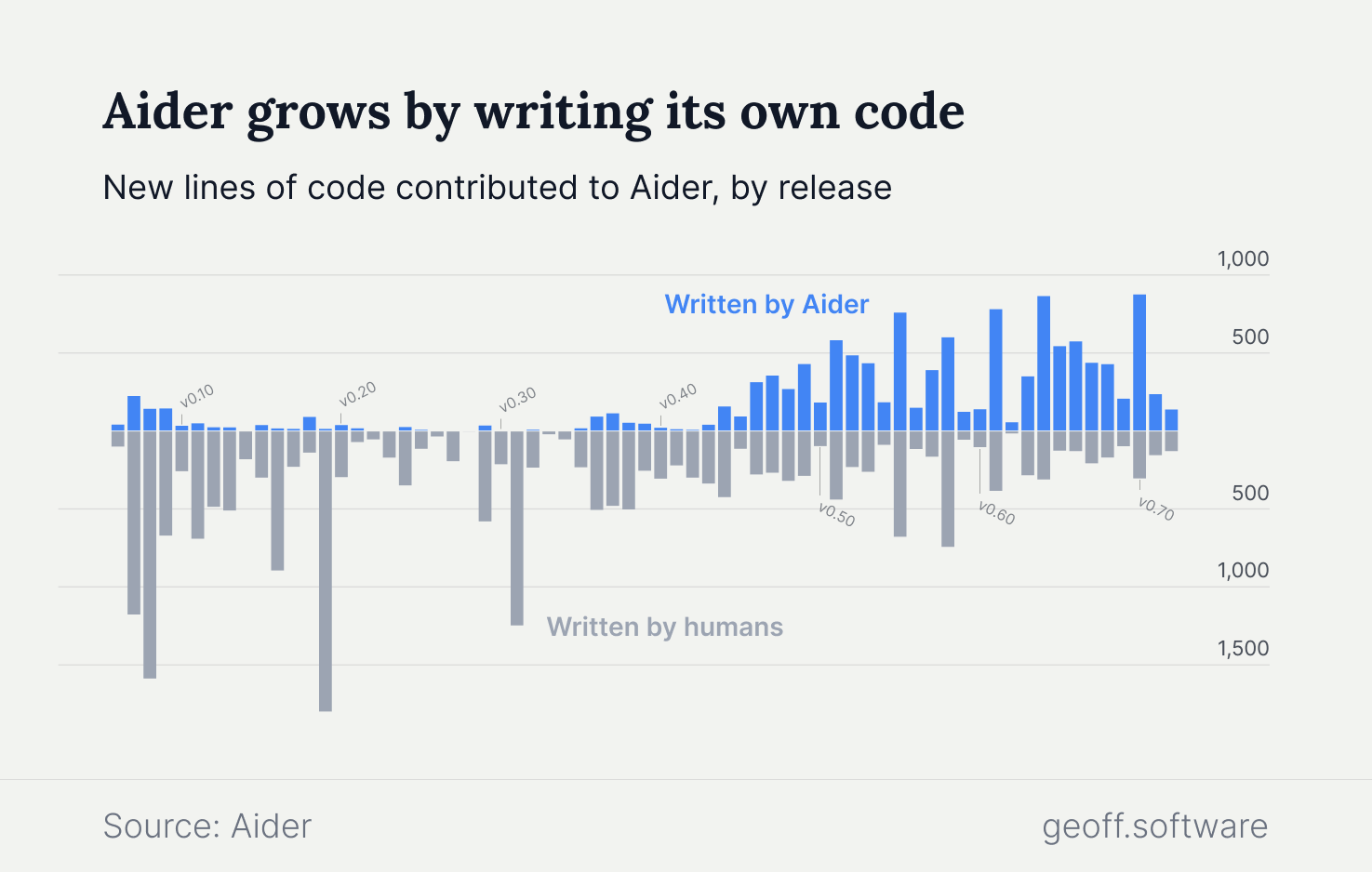

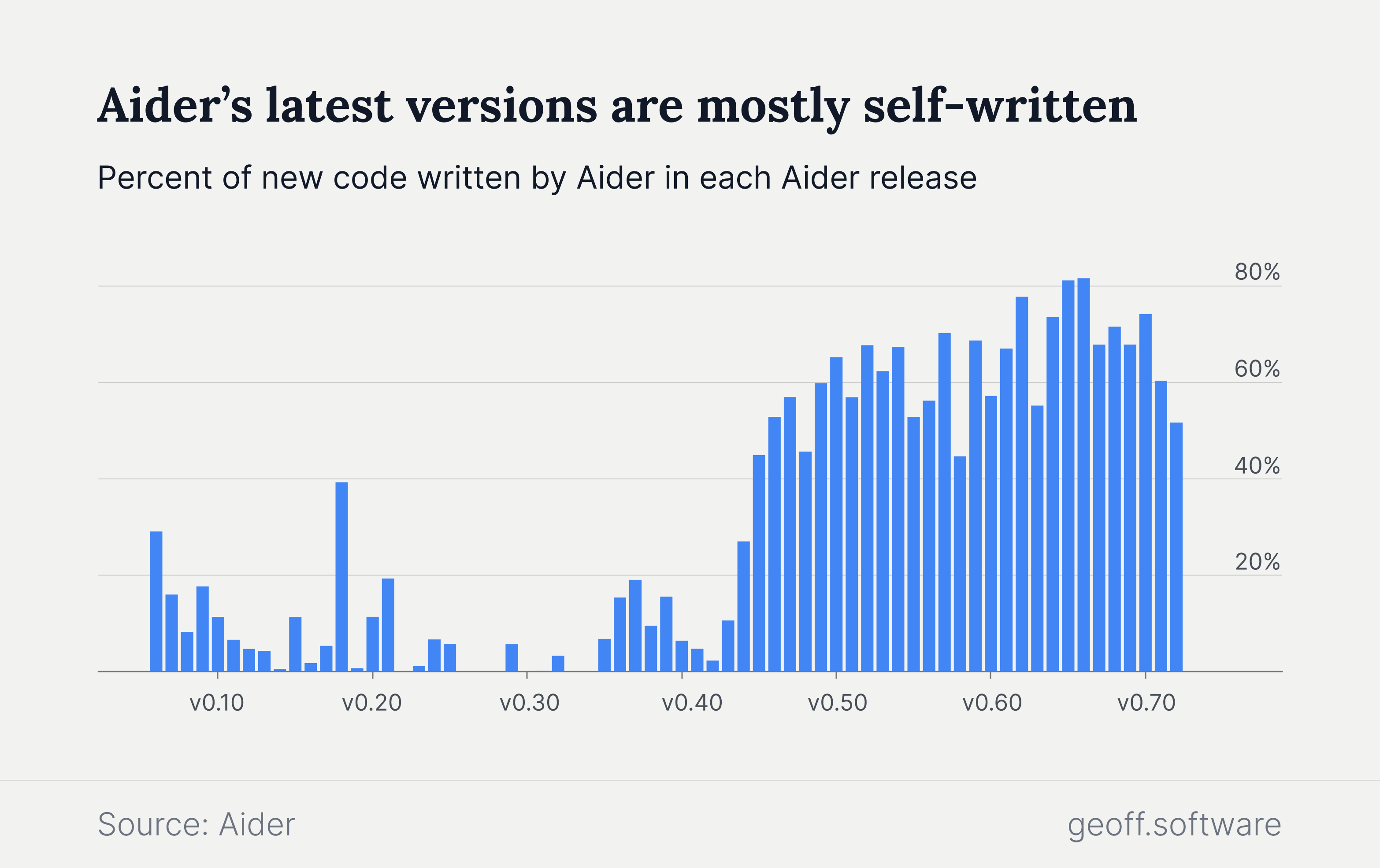

Developers have no shortage of AI tools from which to choose. There are dozens of AI copilots and assistants that can autocomplete code or answer questions as developers do their work. But there is a growing interest in cutting-edge coding agents — deeply powerful, semi-autonomous AI tools that can reason about and make complex changes to an entire project. Aider, one of the more popular agents, is now so advanced that it is writing most of its own codebase.

Developers chat with Aider in natural language, and it uses those instructions to plan and make changes to the codebase. With a single prompt, it can make meaningful changes across files and functions. For more complex problems, developers can use the "ask" command to first walk through potential solutions. Following up with a simple "go ahead" tells Aider to start implementing its proposal.

Aider connects to most LLMs, like Claude and DeepSeek, rather than relying on its own proprietary model. But it does the hard task of guiding those LLMs to turn commands into functioning code changes. Much of the magic lies in how code, documentation, and context are packaged up for LLMs to parse.

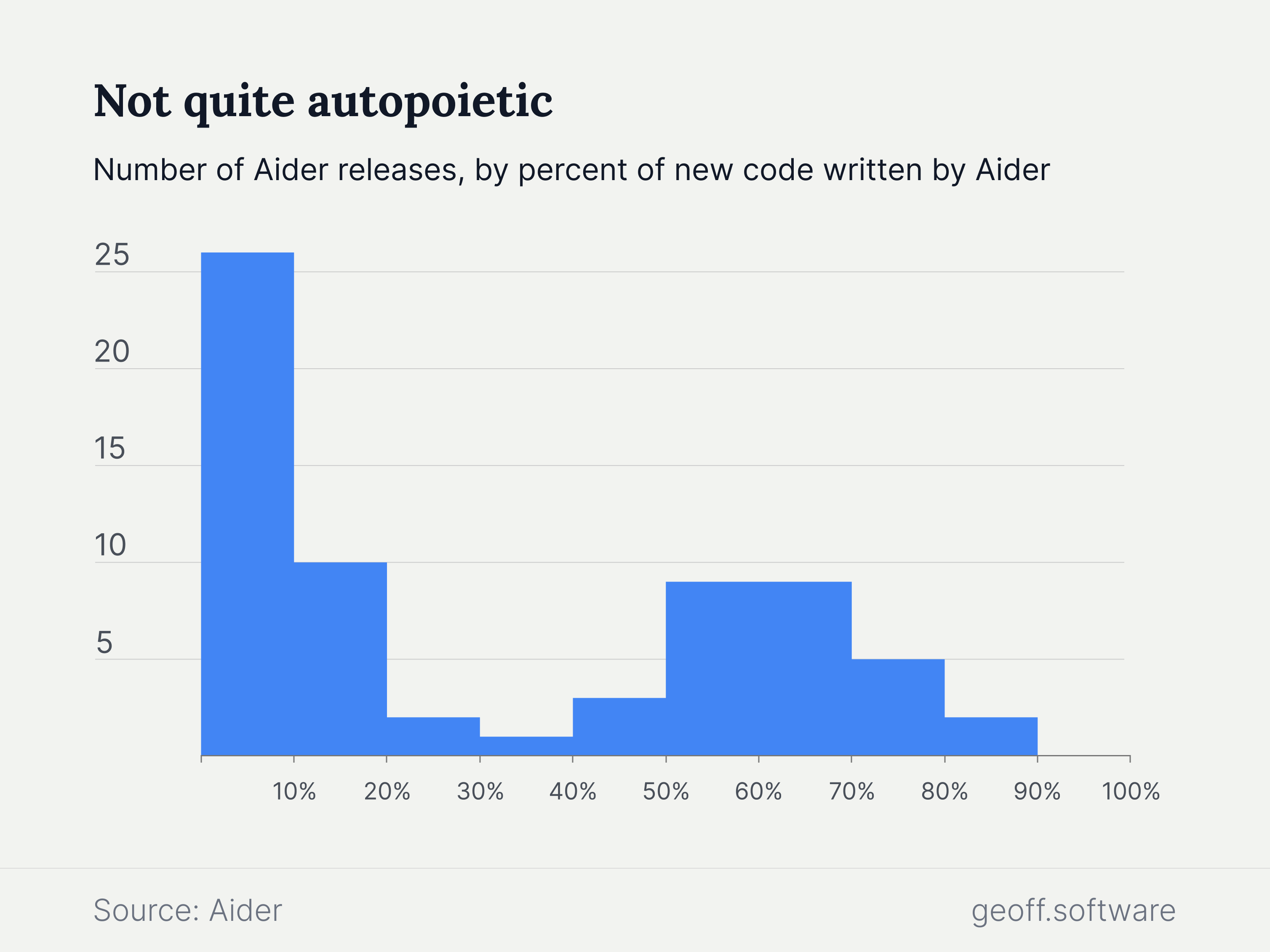

Aider is not yet fully autonomous. No release has been entirely written by AI. But when used, it tends to do most of the coding. Aider more often contributes between 50% and 70% of new code than between 20% and 40%. Such usage hints at the potential workload that AI coding agents can bear in the future.

While some professions are shy about how much they rely on AI for their work, the team behind Aider is transparent. Aider marks commits it authors with a tag, making it easy to see how much code it generates. The data is then used to calculate the amount of human and AI-generated code in each release, all of which is tracked in Aider's documentation.
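To make the per-release calculation concrete, here is a minimal sketch of how tagged commits could be turned into a percentage. It is an illustration under stated assumptions, not Aider's actual tooling: the `(aider)` marker in the author name, the `Commit` record, and the `ai_share` helper are all hypothetical, and counting added lines per commit is a simplification of whatever method the project really uses.

```python
from dataclasses import dataclass

# Assumption: AI-authored commits carry this marker in the author name.
# The article only says commits are marked "with a tag".
AI_MARKER = "(aider)"


@dataclass(frozen=True)
class Commit:
    """Hypothetical record of one commit in a release."""
    author: str
    lines_added: int


def ai_share(commits, marker=AI_MARKER):
    """Return the percentage of added lines that came from tagged commits."""
    total = sum(c.lines_added for c in commits)
    if total == 0:
        return 0.0  # An empty release has no code to attribute.
    ai_lines = sum(c.lines_added for c in commits if marker in c.author)
    return 100.0 * ai_lines / total


# Made-up example data for a single release.
release = [
    Commit(author="Jane Dev (aider)", lines_added=140),
    Commit(author="Jane Dev", lines_added=60),
]

print(f"{ai_share(release):.0f}% of new lines attributed to the AI agent")
```

In practice the commit records would come from the repository history rather than a hand-written list, and a line-level attribution (for example, via blame data) would give a different answer than commit-level totals when humans later edit AI-written lines.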

Aider's openness reflects an industry trend toward improving observability around how developers are using AI tools. Other companies are starting to provide their own data. GitHub Copilot offers teams access to telemetry data, including the number of suggestions accepted and rejected. Codeium, the company behind Windsurf, gives customers a usage dashboard with extrapolated data like hours saved and percent of code written.

For engineering teams adopting AI tools, better data will be vital to understanding their true impact. Lines of code, autocompletions, and suggestions tell a small part of the story. There are plenty of unanswered questions about how that assistance translates to productivity and quality improvements. Perhaps Aider can build itself a new feature to answer those questions as well.